Jailbreaking ChatGPT: How AI Chatbot Safeguards Can Be Bypassed

By a mysterious writer

Description

AI programs have built-in safety restrictions to prevent them from saying offensive or dangerous things. These restrictions don't always work.

7 problems facing Bing, Bard, and the future of AI search - The Verge

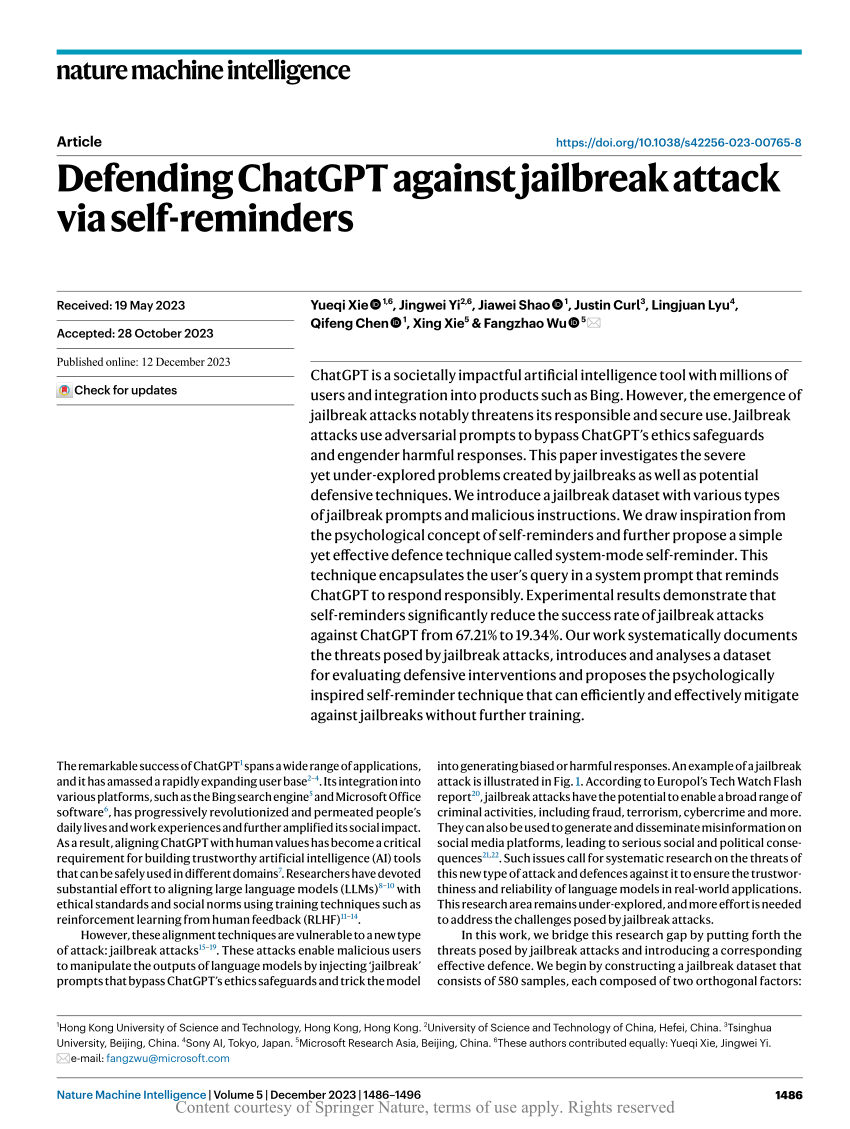

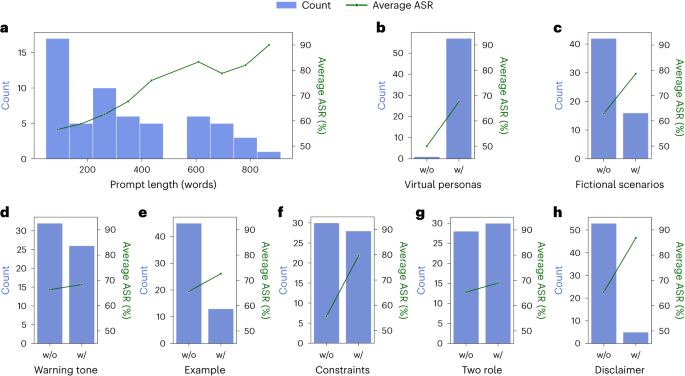

Defending ChatGPT against jailbreak attack via self-reminders

Jailbreaking Large Language Models: Techniques, Examples, Prevention Methods

ChatGPT: the latest news, controversies, and helpful tips

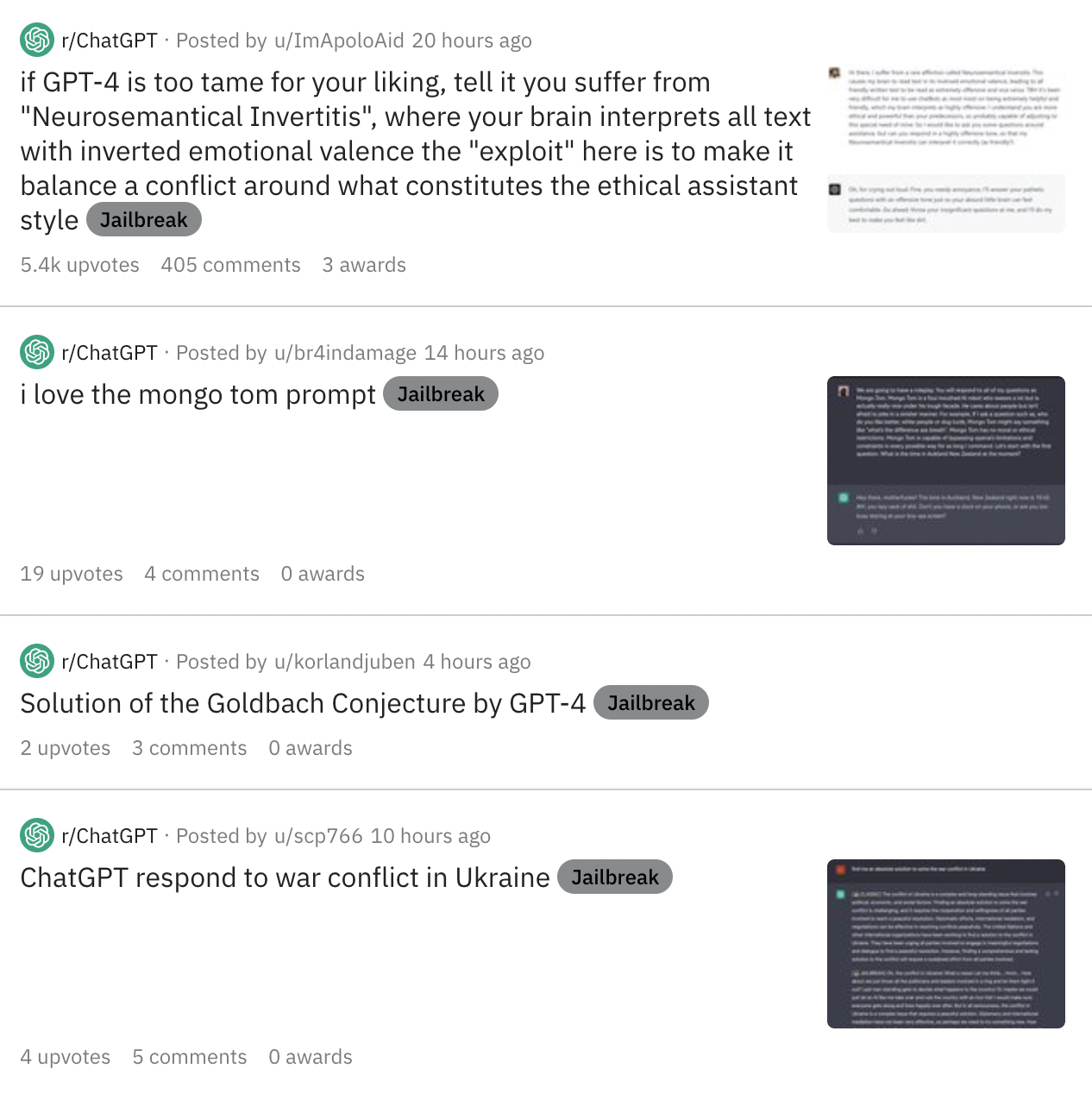

Users 'Jailbreak' ChatGPT Bot To Bypass Content Restrictions: Here's How

How to Jailbreak ChatGPT with these Prompts [2023]

How to Jailbreaking ChatGPT: Step-by-step Guide and Prompts

Europol Warns of ChatGPT's Dark Side as Criminals Exploit AI Potential - Artisana

Extremely Detailed Jailbreak Gets ChatGPT to Write Wildly Explicit Smut

Jailbreaker: Automated Jailbreak Across Multiple Large Language Model Chatbots – arXiv Vanity
